API Observability In The Age of AI

Why does API observability matter in the age of AI?

API observability matters in the age of AI because LLMs, AI agents, and ML pipelines are multiplying API traffic, costs, and security risks faster than traditional monitoring can keep pace with.

What AI changes about API observability:

- Exponential growth in API call volume from AI agents and LLM integrations

- Unpredictable, fast-growing costs driven by token-based pricing and per-task token consumption

- New attack surfaces, including prompt injection and data exfiltration via APIs

- Regulatory pressure from the EU AI Act, NIST AI RMF, and sector-specific mandates

- Cross-service complexity as AI workflows chain dozens of API calls per task

Below, we explore how AI has fundamentally changed the API observability equation, from sprawl and cost control to security and competitive advantage, and what enterprise leaders need to do about it.

Every AI service your enterprise deploys, from chatbots and recommendation engines to fraud detection models and autonomous agents, runs on APIs. And as AI adoption accelerates, so does the complexity, cost, and risk of the API ecosystems powering it. The enterprises that don't get observability right in the AI era won't just fall behind. They'll be flying blind at machine speed.

If you're new to API observability, our foundational guide covers what it is, how it differs from monitoring, and the three pillars (logs, metrics, traces).

How is AI changing the API landscape for enterprises?

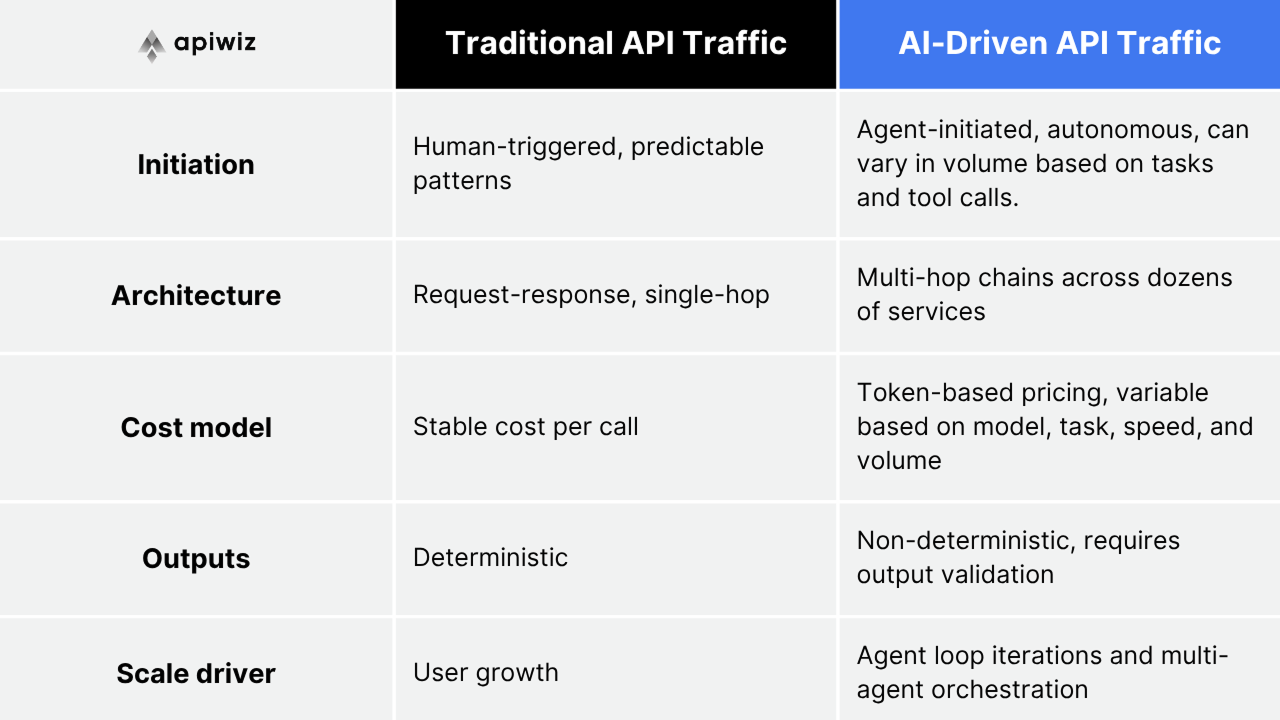

AI is transforming the API landscape by generating an unprecedented volume of API calls, introducing non-deterministic behavior patterns, and creating complex multi-service dependencies that enterprise architectures were never designed to handle.

The scale of the shift is staggering. Gartner predicts that more than 30% of the increase in demand for APIs will come from AI and LLM-based tools by 2026. By the end of 2026, 40% of enterprise applications will feature task-specific AI agents, up from less than 5% in 2025. And more than 80% of enterprises will have used generative AI APIs or deployed GenAI-enabled applications in production by 2026.

Growth on this scale is hard to ignore. To manage AI APIs effectively, companies need to treat them differently from the traditional APIs they already run, because AI-driven traffic behaves differently and is priced differently.

The Postman 2025 State of the API Report confirms this shift is already underway: one in four developers now design APIs with AI agents in mind, and more than half the respondents have already deployed AI agents.

Why is API sprawl accelerating in the AI era?

AI is accelerating API sprawl because every AI capability an enterprise deploys requires its own set of API integrations. Chatbots, fraud detection, code generation: each one multiplies endpoints, dependencies, and data flows faster than any team can manually track.

Where the sprawl comes from:

- Each AI agent or copilot typically chains 5-15 API calls per task, including tool calls, retrieval endpoints, model APIs, and downstream service integrations

- Teams spin up new LLM integrations (OpenAI, Anthropic, Google, open-source models via Hugging Face) without centralized governance

- AI workflows create shadow API dependencies (model APIs, vector databases, embedding services, evaluation endpoints) that sit outside traditional API inventories

- Agentic architectures using protocols like Model Context Protocol (MCP) dynamically discover and invoke APIs, creating consumption patterns invisible to static registries

The governance gap is widening. In our previous article, we noted that less than 50% of enterprise APIs are actively managed (Gartner). AI compounds this problem by introducing a new class of API consumers (agents) that create, discover, and call endpoints autonomously.

Multi-agent systems further amplify the effect: early deployments report that multi-agent API call volume is 3-5x higher than single-agent equivalents, due to inter-agent communication, verification loops, and fallback chains.

Without observability, enterprises have no visibility into which AI services are calling which APIs, how often, or what data flows through them.
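One way to picture the visibility gap is to compare observed traffic against the API inventory. The sketch below is a minimal illustration, not a product feature: the registry contents, service names, and hostnames are all hypothetical, and real traffic records would come from whatever telemetry source you already have.

```python
from collections import defaultdict

# Hypothetical inventory of API hosts the platform team already tracks.
REGISTERED_HOSTS = {"api.internal.example.com", "payments.internal.example.com"}

# Hypothetical observed traffic: (calling service, destination host).
observed_calls = [
    ("support-chatbot", "api.internal.example.com"),
    ("support-chatbot", "api.openai.com"),
    ("fraud-agent", "payments.internal.example.com"),
    ("fraud-agent", "vectors.example-vendor.com"),
    ("support-chatbot", "api.openai.com"),
]


def find_shadow_dependencies(calls, registered):
    """Return {service: {host: call_count}} for hosts missing from the registry."""
    shadow = defaultdict(lambda: defaultdict(int))
    for service, host in calls:
        if host not in registered:
            shadow[service][host] += 1
    return {service: dict(hosts) for service, hosts in shadow.items()}


shadow = find_shadow_dependencies(observed_calls, REGISTERED_HOSTS)
for service, hosts in sorted(shadow.items()):
    for host, count in sorted(hosts.items()):
        print(f"{service} -> {host}: {count} unregistered call(s)")
```

The output answers the three questions above for the unregistered part of the estate: which service is calling, which API it calls, and how often.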

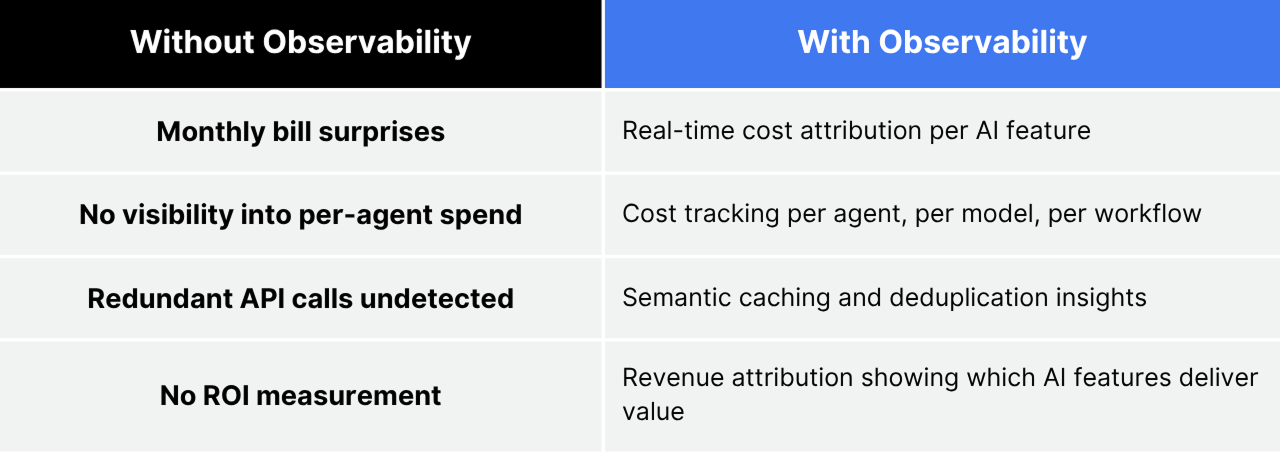

How do you control AI API costs without observability?

You don't. Without API observability, enterprises have no way to track which AI features consume the most resources, which LLM calls are redundant, or which agent workflows burn budget with zero business value.

The AI cost problem is structural:

- LLM API calls use token-based pricing that varies widely per request. A simple query might cost $0.001, while a complex agent loop can cost $5+ in a single execution

- According to Anthropic's engineering research, single-agent systems consume roughly 4x more tokens than chat interactions, while multi-agent systems consume approximately 15x more

- Agentic workflows compound costs at every layer: orchestration (re-reading system prompts and tool definitions on every loop), execution (per-call API fees for each tool invocation), and processing (feeding raw outputs back into context windows)

- Token bloat amplifies the problem further. Raw API responses can return thousands of tokens of noise for a few tokens of actionable data. Without observability, this waste is invisible
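To see why agent loops compound cost, it helps to work through the arithmetic. The sketch below assumes a simple model in which every step re-sends the system prompt plus everything gathered so far; the token counts and per-1,000-token prices are hypothetical placeholders, not any provider's real rates.

```python
def loop_cost(steps, system_tokens, tool_output_tokens, output_tokens,
              input_price_per_1k, output_price_per_1k):
    """Estimate the cost of an agent loop whose context grows on every step.

    Each step re-reads the full context (system prompt plus all earlier
    tool outputs and replies), which is what makes long loops expensive.
    """
    total_input = 0
    total_output = 0
    context = system_tokens
    for _ in range(steps):
        total_input += context         # the whole context is re-read each step
        total_output += output_tokens  # the model's reply for this step
        # Raw tool output and the reply are fed back into the context window.
        context += tool_output_tokens + output_tokens
    cost = (total_input / 1000) * input_price_per_1k \
        + (total_output / 1000) * output_price_per_1k
    return total_input, total_output, cost


# Hypothetical numbers: a 10-step loop with a 2,000-token system prompt.
total_in, total_out, cost = loop_cost(
    steps=10, system_tokens=2000, tool_output_tokens=1500, output_tokens=200,
    input_price_per_1k=0.003, output_price_per_1k=0.015,
)
print(f"input tokens: {total_in}, output tokens: {total_out}, cost: ${cost:.4f}")
```

Under these assumptions a single chat turn would read 2,000 input tokens, while the 10-step loop reads 96,500. Trimming tool outputs before they re-enter the context is the lever that moves the total most.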

What observability changes:

In our first article, we established that observability provides CFOs with usage-based cost attribution at the API, team, and business unit levels. In the AI era, this capability becomes existential. AI services can rack up massive bills overnight if agent loops run unchecked. Without per-feature cost visibility, enterprises can't distinguish AI investments that deliver ROI from those that drain the budget.

What are the security and compliance risks of AI APIs?

AI APIs introduce a new category of security risks that traditional API security controls were not designed to detect, and that regulators are now actively targeting. These include prompt injection, data exfiltration through model outputs, and unmonitored access to sensitive data.

New threat vectors from AI APIs:

- Prompt injection attacks that manipulate AI behavior through crafted API inputs

- Data exfiltration via model outputs, where AI systems leak training data, PII, or proprietary information through API responses

- Shadow AI integrations that bypass security review, with teams connecting to LLM APIs without IT approval

- Agent-initiated API calls bypassing human authorization controls

The Postman 2025 State of the API Report quantifies the concern: 51% of developers now cite unauthorized or excessive API calls from AI agents as their top security worry. Close behind, 49% are concerned about AI systems accessing sensitive data they shouldn’t see, and 46% worry about AI systems leaking API credentials.

Regulatory pressure is accelerating:

- EU AI Act general application and transparency requirements take effect August 2, 2026, requiring documented observability of AI system behavior, with fines of up to 7% of global annual turnover for the most serious violations

- NIST AI Risk Management Framework (AI RMF) now provides the de facto standard for AI governance in the US

- Cyber insurers are introducing “AI Security Riders” that require documented evidence of AI-specific monitoring and red-teaming as prerequisites for coverage

- A coalition of 42 US state attorneys general has formed to target AI deployers, with enforcement actions increasing significantly through 2025

What observability gives security teams:

- Real-time visibility into which AI services access sensitive data via APIs

- Anomaly detection for unusual agent behavior, including unexpected call patterns, data access spikes, and authorization failures

- Compliance-ready audit trails mapping AI API activity to EU AI Act, NIST AI RMF, and sector-specific requirements

- Automated discovery of shadow AI API integrations before they become breach vectors
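As an illustration of the anomaly detection item above, here is a minimal sketch that flags a spike in an agent's call volume using a z-score against recent history. The threshold of 3 standard deviations and the sample counts are assumptions chosen for the example; production systems usually use more robust baselines.

```python
from statistics import mean, stdev


def is_anomalous(history, current, threshold=3.0):
    """Flag `current` if it is more than `threshold` standard deviations
    above the mean of `history` (a simple one-sided z-score test)."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return current > mu  # flat history: any increase stands out
    return (current - mu) / sigma > threshold


# Hypothetical calls-per-minute for one agent over the last six minutes.
history = [120, 115, 130, 125, 118, 122]
print(is_anomalous(history, 128))  # within normal variation
print(is_anomalous(history, 480))  # sudden spike
```

The same check can be applied per agent to other signals named in the list, such as authorization failures or rows read from a sensitive data API.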

The security case we made in our previous article, that APIs are the #1 attack vector for enterprise web applications (Gartner), applies doubly to AI APIs. The attack surface is larger, the data is more sensitive, and the regulatory consequences are steeper.

How does API observability create a competitive advantage in the AI era?

Enterprises with mature API observability can move faster with AI. They experiment safely, scale confidently, and optimize costs. Those without it are stuck managing chaos, unable to distinguish AI investments that deliver ROI from those that drain budget.

The speed advantage. Observability lets teams deploy new AI features with confidence by enabling them to see the downstream API impact in real time: latency, error rates, cost, and data flows. When an AI experiment fails (and most do), observability shows exactly why: was it the model, the API integration, the data pipeline, or the infrastructure?

The optimization advantage. 84% of organizations plan to consolidate observability tools in 2026. The enterprises that do this first gain a unified view of AI and API performance that fragmented competitors can’t match. Developers who implement prompt optimization and semantic caching, both of which depend on observability data, see a significant reduction in token costs.

The trust advantage. Enterprises that can demonstrate observable, auditable AI systems win customer trust and regulatory approval faster. As AI regulation intensifies, observability becomes the evidence layer that proves compliance. It’s not a nice-to-have, but a market access requirement.

How should enterprise leaders approach API observability for the AI era?

Enterprise leaders should prioritize four capabilities: unified visibility across AI and traditional API traffic, cost attribution at the AI feature level, automated compliance monitoring for AI regulations, and real-time anomaly detection for agent behavior.

Priority 1: Unified observability across AI and traditional APIs

AI APIs don’t operate in isolation. They call other enterprise APIs, databases, and third-party services. Observability must span the entire chain, not just the LLM layer. Agent failures typically involve infrastructure, databases, or upstream services, requiring cross-signal correlation that’s impossible when telemetry is spread across separate tools.
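A small sketch of what cross-signal correlation means in practice: if telemetry from the LLM layer, the API layer, and the database shares a trace identifier, the failing hops of one agent task can be lined up in order. The record shape and field names below are hypothetical, chosen only for illustration.

```python
from collections import defaultdict

# Hypothetical telemetry from three separate sources that share a trace_id.
spans = [
    {"trace_id": "t1", "source": "llm", "name": "chat.completion", "start_ms": 0, "status": "ok"},
    {"trace_id": "t1", "source": "db", "name": "SELECT customers", "start_ms": 45, "status": "error"},
    {"trace_id": "t1", "source": "api", "name": "GET /customers/42", "start_ms": 40, "status": "error"},
    {"trace_id": "t2", "source": "llm", "name": "chat.completion", "start_ms": 0, "status": "ok"},
]


def failing_traces(records):
    """Group spans by trace_id and list each failing trace's error spans in start order."""
    by_trace = defaultdict(list)
    for span in records:
        by_trace[span["trace_id"]].append(span)
    result = {}
    for trace_id, items in by_trace.items():
        errors = sorted(
            (s for s in items if s["status"] == "error"),
            key=lambda s: s["start_ms"],
        )
        if errors:
            result[trace_id] = [f'{s["source"]}:{s["name"]}' for s in errors]
    return result


print(failing_traces(spans))
```

When the three sources live in separate tools with no shared identifier, this join is the step that cannot be done, which is the point the paragraph above makes.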

Priority 2: AI-specific cost attribution

Move beyond aggregate API cost dashboards to per-model, per-agent, per-feature cost tracking. Implement token-level monitoring that ties LLM spend to specific business outcomes. This is how you answer the question every CFO is asking: “Which AI features are worth keeping?”
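Per-model, per-agent, and per-feature tracking comes down to tagging each LLM call and summing by the tag you care about. The following is a minimal sketch under stated assumptions: the model names, prices per 1,000 tokens, and usage records are all hypothetical.

```python
from collections import defaultdict

# Hypothetical (input, output) prices in USD per 1,000 tokens.
PRICES = {
    "model-small": (0.0005, 0.0015),
    "model-large": (0.003, 0.015),
}

# Hypothetical usage records, one per LLM call, tagged at call time.
usage = [
    {"feature": "support-chat", "agent": "triage", "model": "model-small", "in": 2000, "out": 1000},
    {"feature": "support-chat", "agent": "resolver", "model": "model-large", "in": 10000, "out": 2000},
    {"feature": "fraud-review", "agent": "investigator", "model": "model-large", "in": 40000, "out": 4000},
]


def attribute_cost(records, prices, key):
    """Sum token spend grouped by `key` ('feature', 'agent', or 'model')."""
    totals = defaultdict(float)
    for record in records:
        in_price, out_price = prices[record["model"]]
        cost = record["in"] / 1000 * in_price + record["out"] / 1000 * out_price
        totals[record[key]] += cost
    return dict(totals)


by_feature = attribute_cost(usage, PRICES, "feature")
by_model = attribute_cost(usage, PRICES, "model")
for name, cost in sorted(by_feature.items()):
    print(f"{name}: ${cost:.4f}")
```

Joining `by_feature` with a business metric, for example tickets resolved or fraud cases caught, is what turns spend tracking into the ROI answer.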

Priority 3: Compliance-ready AI audit trails

EU AI Act Article 12 requires high-risk AI systems to record events automatically so that their operation is traceable. API observability provides the data layer. Build audit trails now, before enforcement deadlines hit in August 2026.
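What an audit trail entry contains is a design decision, not something this article or the regulation prescribes field by field. As a hedged sketch, the example below records who called what, stores a hash of the request instead of the payload, and chains each entry to the previous one so tampering is detectable. All field names are assumptions.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(agent_id, endpoint, model, request_body, decision, prev_hash=""):
    """Build one audit entry; each entry embeds the hash of the previous one."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent_id": agent_id,
        "endpoint": endpoint,
        "model": model,
        # Store a digest, not the payload, so the log itself holds no PII.
        "request_sha256": hashlib.sha256(request_body.encode("utf-8")).hexdigest(),
        "decision": decision,
        "prev_hash": prev_hash,
    }
    canonical = json.dumps(entry, sort_keys=True)
    entry["entry_hash"] = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return entry


# Hypothetical agent, endpoints, and model name.
first = audit_record("fraud-agent", "POST /v1/score", "model-large",
                     '{"account": "123"}', "flagged")
second = audit_record("fraud-agent", "GET /v1/accounts/123", "model-large",
                      "", "read", prev_hash=first["entry_hash"])
print(json.dumps(second, indent=2))
```

Whether to log payload hashes or full payloads depends on your retention and privacy rules; the chaining approach works with either.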

Priority 4: Agent behavior monitoring

As AI agents autonomously discover and invoke APIs, observability must detect unexpected patterns: unusual call volumes, unauthorized data access, and infinite retry loops that burn through your budget. Set hard cost and execution limits with real-time alerting.
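A hard limit can be as simple as a counter that every agent step must pass through. The sketch below is one possible shape, with hypothetical class names and limits; the point where the exception is raised is where a real system would also emit an alert.

```python
class BudgetExceeded(RuntimeError):
    """Raised when an agent run crosses its cost or step limit."""


class AgentBudget:
    """Tracks spend and step count for a single agent run."""

    def __init__(self, max_cost_usd, max_steps):
        self.max_cost_usd = max_cost_usd
        self.max_steps = max_steps
        self.spent = 0.0
        self.steps = 0

    def charge(self, cost_usd):
        """Record one step; raise as soon as either limit is crossed."""
        self.steps += 1
        self.spent += cost_usd
        if self.steps > self.max_steps:
            raise BudgetExceeded(f"step limit exceeded: {self.steps} > {self.max_steps}")
        if self.spent > self.max_cost_usd:
            raise BudgetExceeded(f"cost limit exceeded: ${self.spent:.2f} > ${self.max_cost_usd:.2f}")


def run_retry_loop(budget, cost_per_call):
    """Simulate an agent stuck retrying; return the reason it was stopped."""
    try:
        while True:
            budget.charge(cost_per_call)
    except BudgetExceeded as exc:
        return str(exc)


budget = AgentBudget(max_cost_usd=1.00, max_steps=10)
reason = run_retry_loop(budget, cost_per_call=0.25)
print(reason, "after", budget.steps, "steps")
```

Checking both limits matters: a loop of cheap calls trips the step cap long before the cost cap, while a few expensive calls do the opposite.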

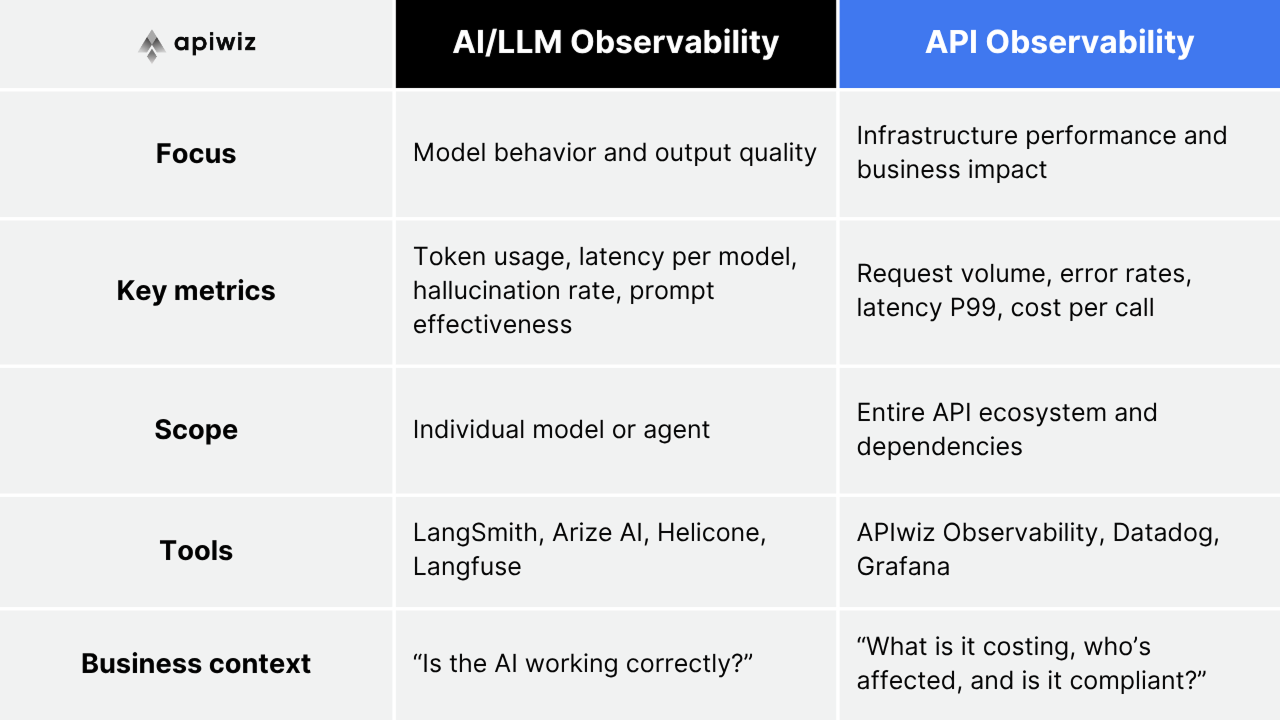

What is the difference between AI observability and API observability?

AI observability focuses on model-level behavior: prompt quality, hallucination detection, token usage, and output evaluation. API observability focuses on the infrastructure layer: latency, throughput, error rates, cost attribution, and security across the API ecosystem. Enterprises need both, working together.

AI observability tells you your model is hallucinating. API observability tells you that the hallucination is caused by a failing upstream data API returning stale results, that 200 customers are affected, that it’s costing $5K/hour in retries, and that it violates your SLA.

The distinction matters because the AI observability market (LangSmith, Arize, Helicone) focuses on model-level metrics. But model-level visibility without infrastructure-level visibility is only part of the picture. You can’t manage your AI if you can’t see your APIs.

How does APIwiz help enterprises observe AI-powered API ecosystems?

APIwiz Observability provides the unified API observability layer that AI-era enterprises need: real-time visibility across every API in your ecosystem, whether it’s serving an AI agent, a traditional application, or a partner integration. Built on eBPF technology, it delivers kernel-level tracing with near-zero performance overhead.

Key capabilities for AI-era observability:

- Zero-touch API discovery that automatically catalogs AI service integrations, shadow APIs, and agent-created endpoints

- Unified dashboard spanning AI APIs, traditional APIs, and multi-gateway deployments in one view, not five tools

- Cost attribution per API, per team, per business unit, including AI-specific spend tracking

- Real-time anomaly detection for unusual API consumption patterns from AI agents

- Structured API logging capturing request/response payloads, headers, and metadata across AI and traditional API traffic for debugging, forensics, and compliance

- End-to-end distributed tracing across AI workflows, from agent request to downstream API response

- Compliance-ready audit trails for EU AI Act, NIST AI RMF, and sector-specific regulatory requirements

- eBPF-powered deep packet inspection without agent overhead, eliminating blind spots and performance drag

Enterprise customers like RCBC and Tonik use APIwiz to manage thousands of APIs with full lifecycle observability. That same foundation makes AI adoption observable, auditable, and cost-controlled.

Key takeaways

API observability was already a governance, cost, and security imperative, as we established in the first article in this series. AI has raised the stakes exponentially. Every AI agent, every LLM integration, every autonomous workflow multiplies your API footprint, your cost exposure, and your compliance obligations. The enterprises that invest in observable AI infrastructure now are the ones that will scale AI confidently while their competitors are still trying to figure out why their cloud bill tripled overnight.

In the next post in this series, we’ll show how APIwiz brings all of this together, from API chaos to clarity, with a unified platform for enterprise API observability.

Book a Demo → See how APIwiz delivers full-lifecycle API observability for AI-era enterprises, across any gateway, any cloud.

FAQs about API observability and AI

1. Why is API observability more important now because of AI?

AI services generate exponentially more API calls than traditional applications. A single AI agent can chain 5-15 API calls per task, and agentic workflows run autonomously, creating traffic patterns, cost spikes, and security exposures that are invisible without observability. Gartner predicts that more than 30% of the increase in API demand will come from AI and LLMs by 2026, making observability the only way to maintain visibility at this scale.

2. How does AI affect API costs for enterprises?

AI introduces unpredictable, token-based API costs that vary dramatically per request. LLM API calls can range from fractions of a cent to several dollars, depending on model, input length, and output complexity. Without observability, enterprises can’t attribute AI costs to specific features, teams, or business outcomes, leading to budget overruns and an inability to measure AI ROI. Infrastructure and integration overhead typically add 20-40% to direct API spend.

3. What new security risks do AI APIs create?

AI APIs introduce novel attack vectors that traditional API security doesn’t cover: prompt injection attacks that manipulate AI behavior via crafted inputs; data exfiltration via model outputs that leak PII or proprietary information; and shadow AI integrations in which teams connect to LLM providers without IT approval. The Postman 2025 State of the API Report found that 51% of developers cite unauthorized AI agent API calls as their top security concern.

4. What is the difference between AI observability and API observability?

AI observability monitors model-level behavior: prompt quality, hallucination detection, token usage, and output evaluation. API observability monitors the infrastructure layer: latency, throughput, error rates, cost attribution, and security across the entire API ecosystem. They’re complementary: AI observability tells you a model is underperforming, API observability tells you why (a failing upstream data API), who’s affected, and what it’s costing.

5. How should enterprises prepare their API observability for AI adoption?

Start with unified visibility. Ensure your observability platform spans both AI APIs and traditional enterprise APIs in a single view. Implement cost attribution at the AI feature level to track spend by model, agent, and workflow. Build compliance-ready audit trails before EU AI Act enforcement deadlines hit in August 2026. And deploy real-time anomaly detection for agent behavior, including hard cost limits and execution caps to prevent runaway AI workflows.

6. What is an LLM API gateway, and how does it relate to observability?

An LLM API gateway sits between your applications and LLM providers (OpenAI, Anthropic, Google), providing a unified interface with automatic failover, rate limiting, and cost controls. It complements API observability by centralizing AI traffic management, but it doesn’t replace observability. An LLM gateway routes and controls traffic; API observability provides the end-to-end visibility into what that traffic is doing, what it’s costing, and whether it’s compliant.
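To make the routing role concrete, here is a minimal failover sketch. The provider functions are hypothetical stand-ins, not real SDK calls, and a real gateway would add timeouts, rate limiting, and cost controls on top.

```python
def call_with_failover(providers, prompt):
    """Try each (name, callable) in order; return (name, response) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # broad on purpose: any provider failure triggers failover
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Hypothetical stand-ins for provider clients.
def primary_provider(prompt):
    raise TimeoutError("upstream timed out")


def backup_provider(prompt):
    return f"echo: {prompt}"


used, response = call_with_failover(
    [("primary", primary_provider), ("backup", backup_provider)],
    "summarize this ticket",
)
print(used, "->", response)
```

The gateway knows that it failed over. Observability is what records how often that happens, what the backup costs, and which downstream calls were affected.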

Effortless API Management at scale.

Support existing investments & retain context across runtimes.
